We rarely stop to think about how extraordinary language is. Every spoken word requires our brain to turn meaning into movement, monitor the body, and adjust on the fly—all in a fraction of a second. For NYU Shanghai Associate Professor of Neural and Cognitive Sciences Tian Xing’s team, that makes language the ideal vehicle to study the brain itself. “We use language as a window into understanding how the brain works,” Tian said. In three recent papers published in Communications Biology, eLife, and NeuroImage, his team examined how speech is produced, corrected, and socially adapted, building a broader picture of the motor system’s role in human cognition.

Rethinking the route from thought to speech

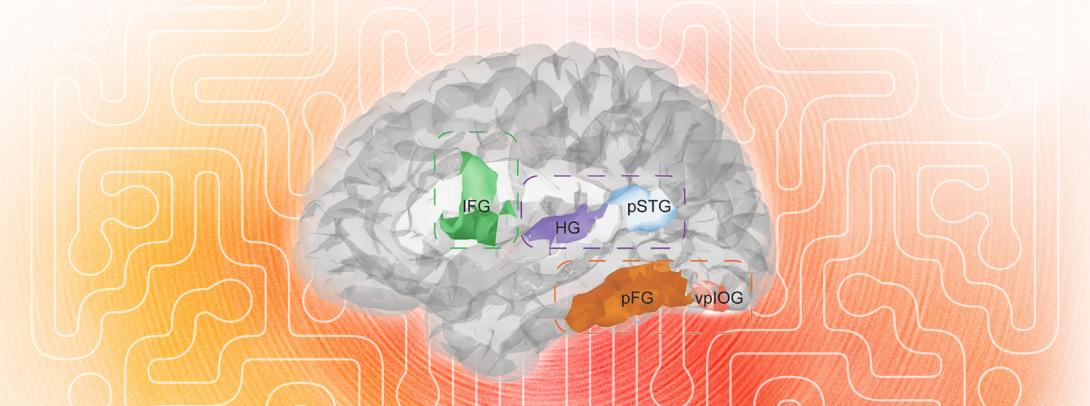

The first study, published in Communications Biology, tackles how speech happens. Traditional models have often treated speech production as a reverse version of speech perception. To test that assumption directly, the researchers used stereotactic intracranial EEG (sEEG), the most advanced method available in human research: an invasive but highly precise technique that records neural activity from electrodes placed inside the human brain. Participants read single Chinese characters aloud while the team tracked when different brain regions became active. The results showed that activity in the inferior frontal gyrus, a motor-related region, preceded activity in the posterior superior temporal gyrus, an auditory region. The finding challenges an assumption that has been common in the field for fifty years and suggests that speech production may not simply replay a sound-based route in reverse, but may involve earlier motor encoding than had long been assumed.

Why correction depends on action, not just hearing

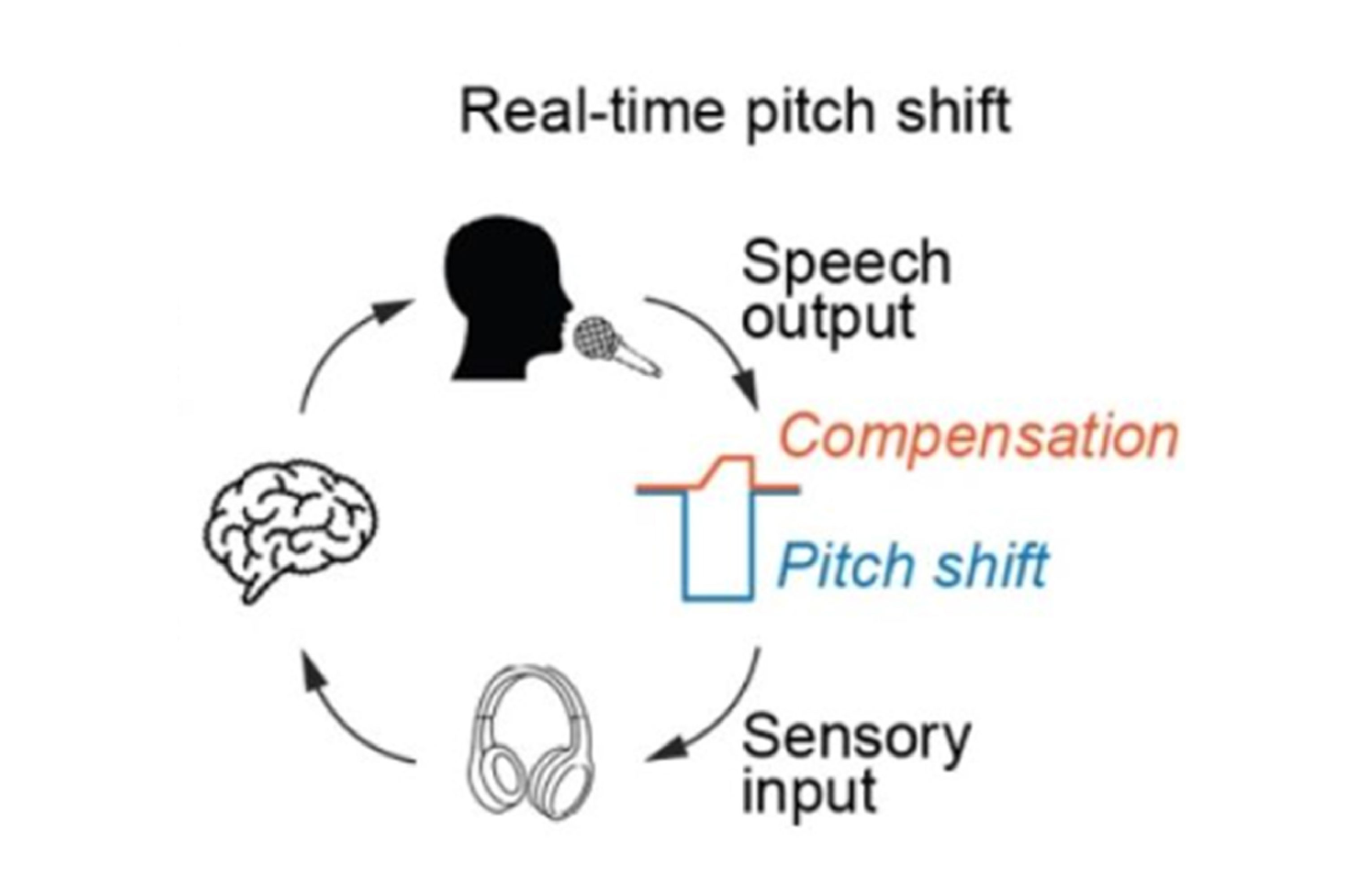

The second study, published in eLife, turned to learning in real time. When people hear themselves through headphones and the pitch of their voice is shifted, for example, they automatically alter their voice to compensate. But what actually drives that learning from one utterance to the next? Is it the sensation of hearing something wrong, or the motor adjustment made in response? Across five experiments, the team separated these two possibilities. They found that the adjustment in speech behavior was predicted not by sensory error, but by the speaker’s own motor compensation. In other words, what mattered most was not simply noticing that something sounded wrong, but how the brain’s motor system corrected it. The study also extended the question from isolated vowels to connected speech and found that this rapid, trial-by-trial learning was constrained by linguistic structure. An adjustment made on one syllable influenced the next syllable when the two belonged to the same word, but not when they belonged to different words. Collectively, the findings demonstrate that rapid speech learning is co-regulated by our motor and linguistic systems.

Why we start sounding like one another

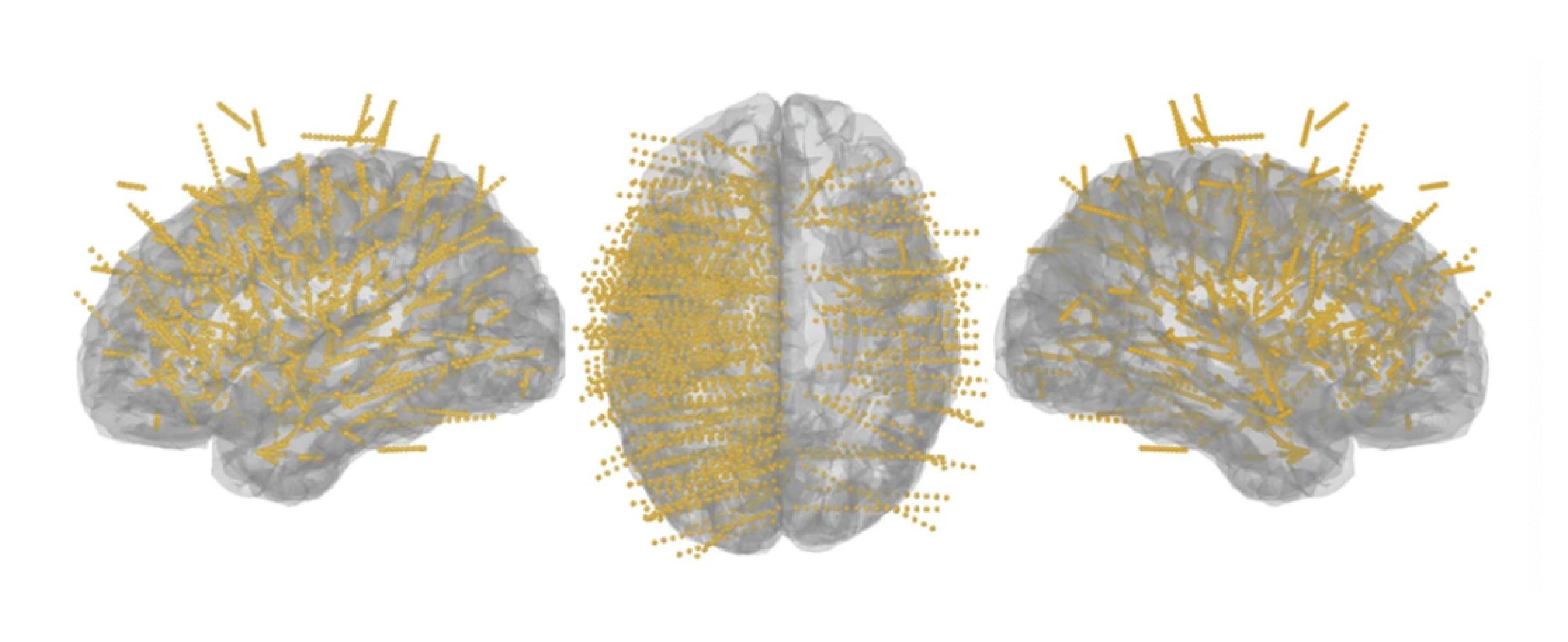

The third study, published in NeuroImage, moved from speech correction to social adaptation. In everyday conversation, people naturally begin to mimic one another’s speech patterns and accents, usually without noticing. This phenomenon is known as phonetic convergence. To understand why it happens, the researchers combined a shadowing task with EEG and a novel speaking oddball paradigm. Male participants first repeated words spoken by a female speaker, then completed listening-and-speaking tasks designed to test whether brain responses reflected memory of the speaker’s voice or predictions grounded in the listener’s own motor system. The results pointed to the latter. After convergence behavior occurred, motor-based predictions enhanced sensitivity to listener-matched features, and stronger neural effects were associated with greater vocal convergence. Rather than treating imitation as a purely auditory phenomenon, the study suggests that the motor system helps drive this subtle form of social learning.

Taken together, the three studies point in the same direction. Whether the question is how speech begins, how it is corrected, or how it adapts in social interaction, the motor system appears to do far more than simply execute commands at the end of the process. “The motor system is not just a passive output process,” Tian said. “It is part of the larger cognitive process itself.”

The three recently published studies exemplify the research program of the Speech, Language, and Neuroscience Group directed by Tian at NYU Shanghai. Together with its research on mental disorders and health, the group aims to use speech and language to understand, develop, and potentially protect and heal the brain. Because the group takes a collective view of the brain, body, and mind in the context of speech and language, the importance of this shift in perspective may extend well beyond basic theory. Tian said his team hopes to continue using cutting-edge tools, including intracranial recordings and even single-cell methods in humans, to study what language reveals about brain function, while also exploring applications in medical conditions closely tied to communication, including autism, anxiety, and depression.